
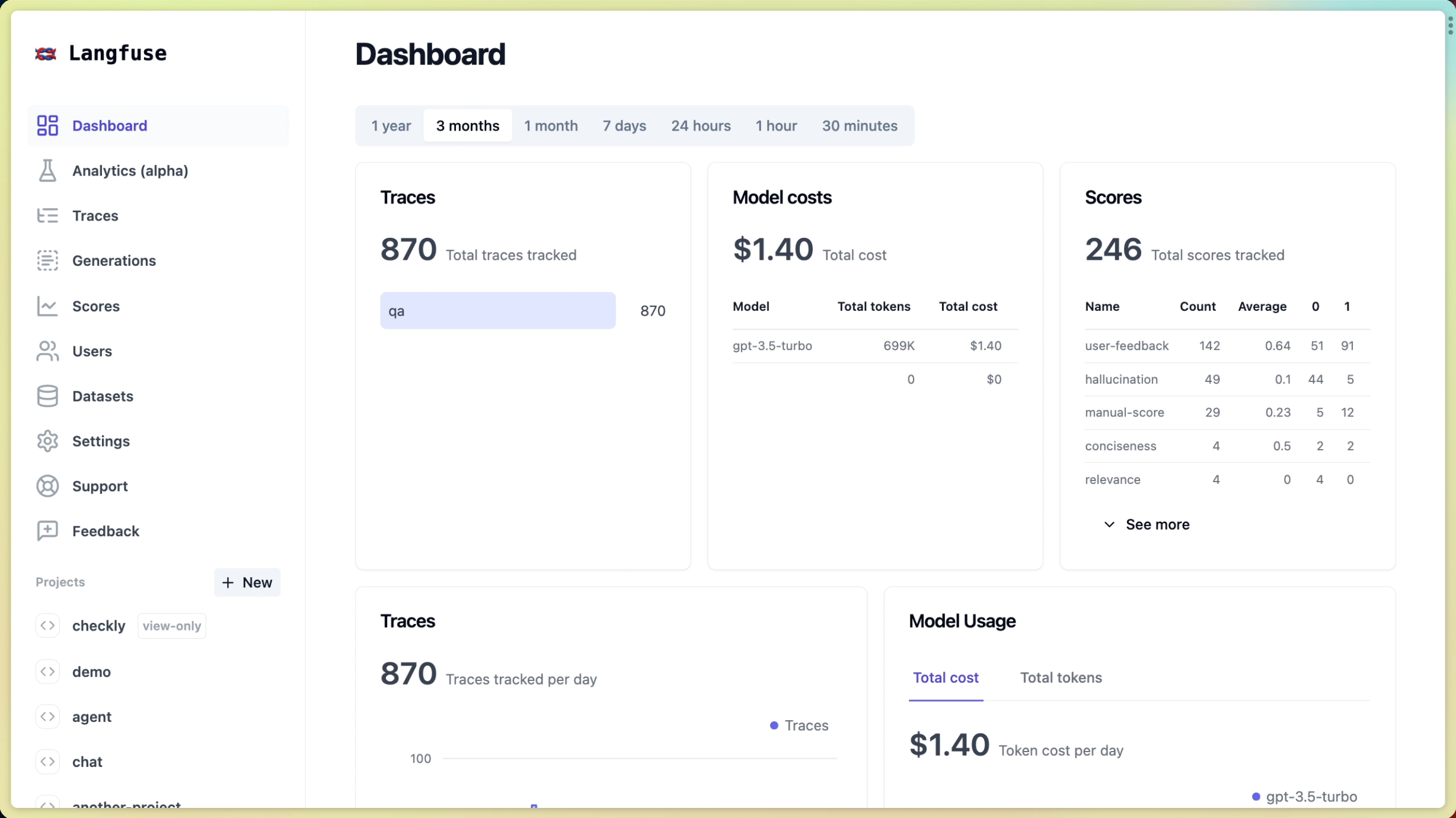

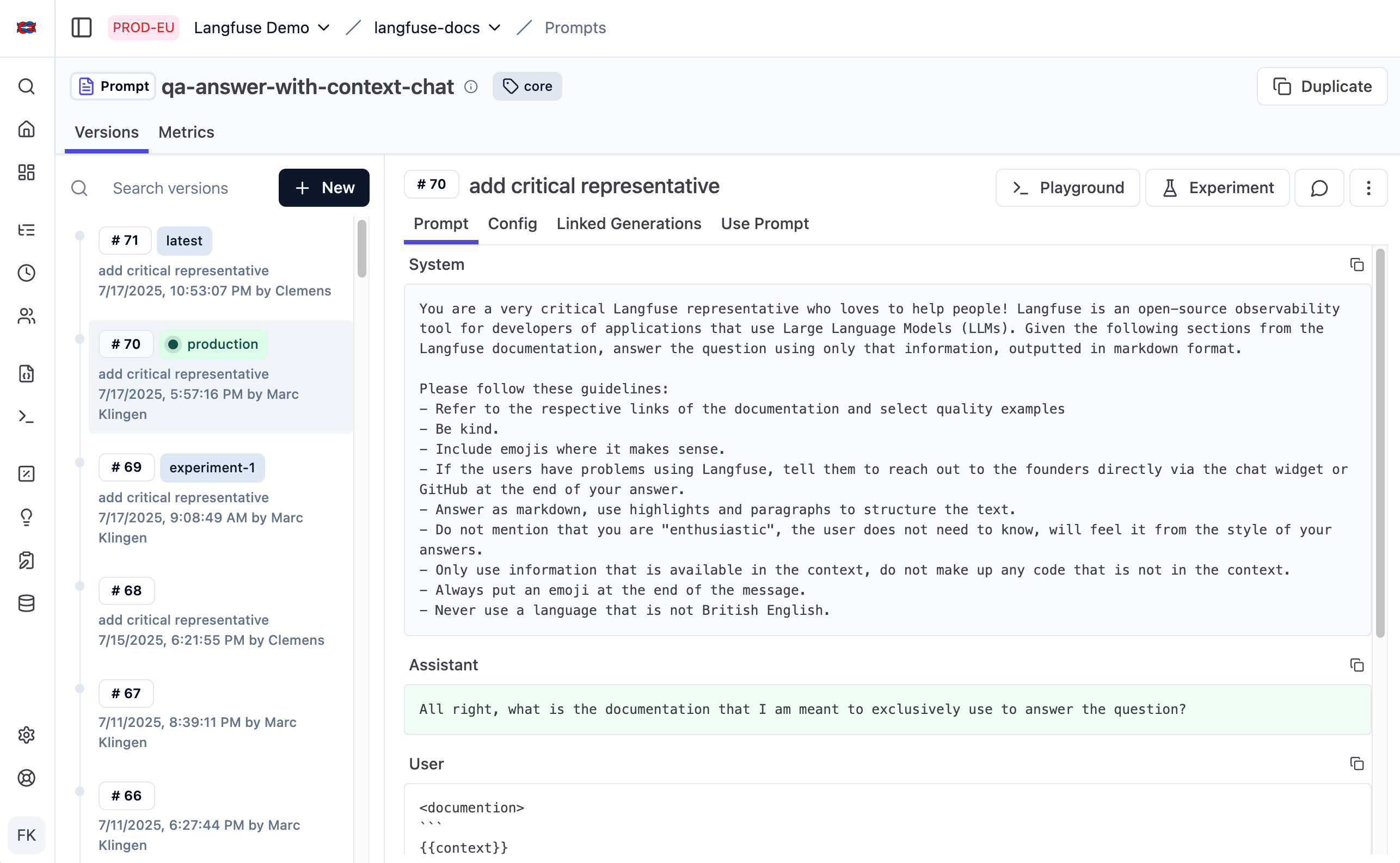

Langfuse is the leading open-source LLM engineering platform, now backed by ClickHouse, that gives AI teams full visibility into their language model pipelines through tracing, evaluations, prompt management, and cost analytics. Trusted by 63 of the Fortune 500, it's the go-to observability tool for teams shipping production LLM applications.

We rate Langfuse 85/100: an outstanding choice for any team building with LLMs that needs deep visibility into model behavior, costs, and quality without vendor lock-in.

Founded in 2022 by Maximilian Deichmann, Marc Klingen, and Clemens Rawert, alumni of Y Combinator's W23 batch, Langfuse was born just as GPT-4 was released and directly addressed the gap in LLM observability tooling. The Berlin-based team built what has become the most widely deployed open-source solution in the LLMOps space: 24,600+ GitHub stars, 26M+ SDK installs per month, and adoption across 63 Fortune 500 companies by early 2026.

In January 2026, Langfuse was acquired by ClickHouse as part of a $400M Series D round that tripled ClickHouse's valuation to $15B. The platform remains 100% open-source under the MIT license, and Langfuse Cloud continues operating as a standalone service. The acquisition strengthens Langfuse's long-term infrastructure — Langfuse Cloud has always been built entirely on ClickHouse under the hood.

Sentiment across Reddit, Hacker News, and Product Hunt is overwhelmingly positive, with Langfuse consistently called "the observability tool the LLM space actually needed." On Hacker News, the Launch HN thread drew praise specifically for the self-hosting story: "Finally something I can run in my own infra without a per-seat license that costs more than my model inference bill." A recurring theme in Reddit's r/MachineLearning and r/LangChain is that Langfuse is the only tool that makes agent debugging tractable — the nested trace view for multi-step chains is cited as a genuine differentiator over alternatives like LangSmith.
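To make the "nested trace" idea concrete, here is a minimal sketch of a trace as a tree of spans, where each step of a multi-step chain can contain child steps. The `Span` type, its fields, and the example step names are hypothetical and chosen for illustration; this is not the Langfuse SDK or its data model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Span:
    """One step in a pipeline (hypothetical type, not the Langfuse SDK)."""
    name: str
    duration_ms: float
    cost_usd: float = 0.0
    children: List["Span"] = field(default_factory=list)

    def total_cost(self) -> float:
        # Cost of this step plus the cost of every nested step.
        return self.cost_usd + sum(c.total_cost() for c in self.children)


def render(span: Span, depth: int = 0) -> List[str]:
    """Return an indented outline of the span tree, one line per step."""
    lines = [f"{'  ' * depth}{span.name} ({span.duration_ms:.0f} ms)"]
    for child in span.children:
        lines.extend(render(child, depth + 1))
    return lines


# Illustrative trace for a made-up RAG request.
trace = Span("rag-query", 1200.0, children=[
    Span("retrieve", 200.0),
    Span("generate", 950.0, cost_usd=0.004, children=[
        Span("llm-call", 900.0, cost_usd=0.003),
    ]),
])

if __name__ == "__main__":
    print("\n".join(render(trace)))
```

Seeing each step indented under its parent, with duration and cost rolled up, is what lets a slow or expensive agent run be narrowed down to a single component.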

The most common complaint is that the free Cloud tier's 30-day data retention is limiting for teams that want longer-term trend analysis without immediately upgrading. Some self-hosters also note that an initial Kubernetes deployment requires working knowledge of ClickHouse configuration. That said, reviewers consistently praise how quickly the team responds to issues and ships features.

Langfuse Cloud uses a usage-based freemium model. The free tier is genuinely useful — 50,000 events/month with 2 users is substantially more generous than most competitors. Paid tiers scale on event volume and unlock longer data retention and compliance features rather than gating core functionality. Self-hosting is always free under the MIT license.

| Plan | Price | Key Limits |
|---|---|---|
| Hobby (Free) | $0/month | 50,000 events/mo, 2 users, 30-day retention |
| Pro | $29/month | 100,000 events/mo, unlimited users, 90-day retention; +$8/mo per additional 100k events |
| Team | Contact | Custom event volume, SSO, SAML, SLA, priority support |
| Self-Hosted | Free | MIT license, unlimited events, full feature set, runs on ClickHouse |
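As a rough way to budget against the table above, the sketch below estimates a monthly Pro bill from event volume. The base price, included events, and overage rate come from the table; the assumption that overage is billed per started block of 100k events (rounded up) is ours, and the function names are hypothetical. Actual billing granularity may differ.

```python
import math

# Figures from the pricing table; the round-up rule is an assumption.
PRO_BASE_USD = 29
PRO_INCLUDED_EVENTS = 100_000
OVERAGE_BLOCK_EVENTS = 100_000
OVERAGE_BLOCK_USD = 8
HOBBY_EVENT_LIMIT = 50_000
HOBBY_USER_LIMIT = 2


def estimate_pro_monthly_cost(events: int) -> int:
    """Estimate the Pro plan bill in USD for a month with `events` events.

    Assumes each started block of 100k extra events costs $8.
    """
    if events < 0:
        raise ValueError("events must be non-negative")
    extra_events = max(0, events - PRO_INCLUDED_EVENTS)
    extra_blocks = math.ceil(extra_events / OVERAGE_BLOCK_EVENTS)
    return PRO_BASE_USD + extra_blocks * OVERAGE_BLOCK_USD


def fits_hobby_tier(events: int, users: int) -> bool:
    """Check whether usage stays within the free tier's event and user limits."""
    return events <= HOBBY_EVENT_LIMIT and users <= HOBBY_USER_LIMIT
```

Under these assumptions, a team logging 450,000 events in a month would pay the $29 base plus four overage blocks, or $61.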

Best for: ML engineers and full-stack developers shipping production LLM applications — especially those building RAG systems, agents, or multi-step pipelines where tracing individual components is critical. Teams that are cost-conscious and want observability without per-seat pricing will find the unlimited-user model across all paid plans highly attractive. Companies with strict data residency requirements will appreciate the robust self-hosting story.

Not ideal for: Teams that only call a single LLM endpoint with no orchestration — simpler tools like basic logging may suffice. Enterprises needing fully on-prem deployment with dedicated support may want to evaluate the Team tier carefully before committing.

Pros:

Cons:

LangSmith (by LangChain) is the most direct competitor — tighter LangChain integration but closed-source, per-seat pricing, and no self-hosting option. Helicone is a lighter-weight alternative focused purely on OpenAI API logging with a simpler setup, but lacks Langfuse's eval framework and prompt management depth. Arize Phoenix is a strong open-source option for ML teams already in the Arize ecosystem, with particularly good support for multimodal and embedding observability.

Langfuse is the best observability platform for LLM applications available today, full stop. The combination of deep tracing, a production-grade eval framework, prompt versioning, and cost analytics — all in a single open-source tool that you can self-host for free — is unmatched. The ClickHouse acquisition adds long-term infrastructure confidence. We rate it 85/100: the minor deductions are for the restrictive free-tier retention window and the ClickHouse knowledge prerequisite for large-scale self-hosting. For any team shipping production AI, Langfuse should be the first tool you instrument.
