Qwen 3.6-Plus Debuts on Fireworks AI in Landmark Alibaba Partnership (May 1, 2026)

Alibaba's flagship closed-weights model Qwen 3.6-Plus is live on Fireworks AI's serverless inference platform — the first time a top-tier Qwen Plus model has been hosted by an outside U.S. provider. 1M-token context, 78.8 on SWE-bench Verified, $0.50/$3 per million tokens.

Alibaba's Qwen team and U.S. inference platform Fireworks AI announced a strategic partnership that brings Alibaba's flagship closed-weights model, Qwen 3.6-Plus, to Fireworks' serverless inference platform. It is the first time Qwen's top-tier proprietary model has been hosted by an outside provider.

What Happened

The announcement, posted by Alibaba Qwen on X and confirmed on Alibaba Cloud's official channels, describes the partnership as a "landmark collaboration" that will bring Qwen 3.6-Plus to global developers via Fireworks AI's optimised serving stack. The model is now live as a serverless model on fireworks.ai for both inference and fine-tuning, priced at $0.50 per 1M input tokens and $3.00 per 1M output tokens. Until this week, the only way to access Qwen 3.6-Plus outside China was through Alibaba's own Model Studio API.

Key Details

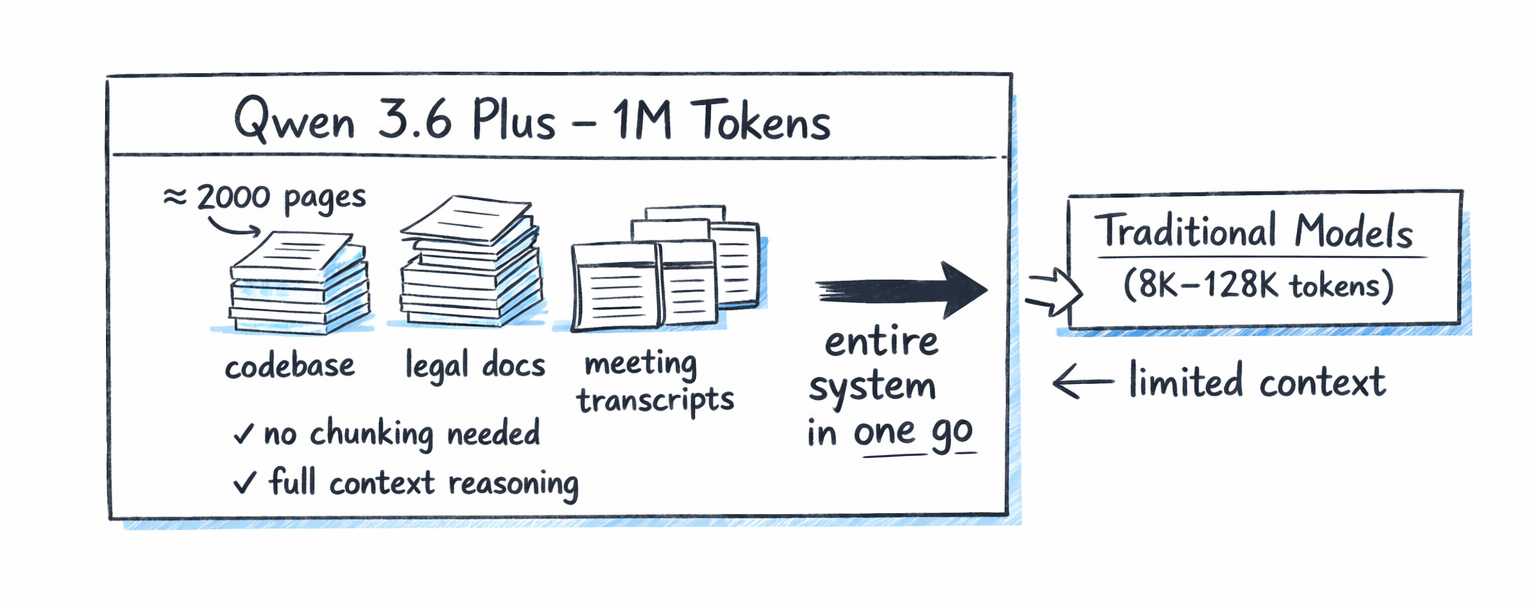

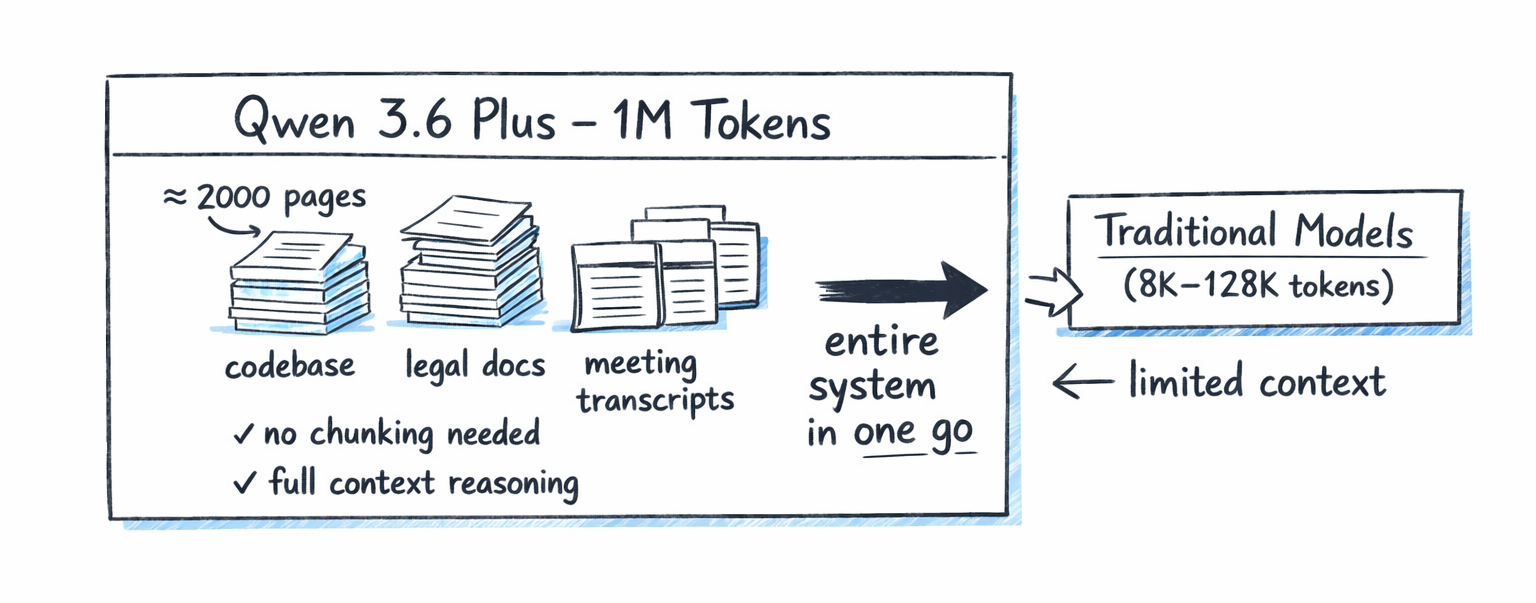

- 1M-token context window — Qwen 3.6-Plus expands from the previous 262K window in Qwen 3.5-Plus, allowing entire codebases to be passed without chunking.

- SWE-bench Verified: 78.8 — Qwen says the model edges out Anthropic's Claude Opus 4.5 (76.8) on the agentic coding benchmark, and scores 61.6 on Terminal-Bench 2.0.

- Always-on chain-of-thought — unlike Qwen 3.5's optional thinking mode, 3.6-Plus reasons before every response, with up to 65,536 output tokens per turn.

- Pricing parity — at $0.50/$3.00 per million tokens, Fireworks matches Alibaba Cloud's published rate, undercutting Claude Opus 4.5 ($15/$75) by roughly 25–30×.

- Fine-tuning support — Fireworks customers can fine-tune Qwen 3.6-Plus on their own data, a capability Alibaba does not offer publicly through Model Studio.
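The pricing comparison in the list above can be checked with a short sketch. The rates are the per-million-token figures quoted in this article, not values fetched from either provider. The function name `request_cost` and the sample workload of 100,000 input tokens and 4,000 output tokens are illustrative assumptions.

```python
# Per-million-token rates (USD) as quoted in this article; not fetched from any API.
RATES = {
    "qwen-3.6-plus": {"input": 0.50, "output": 3.00},
    "claude-opus-4.5": {"input": 15.00, "output": 75.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the quoted rates."""
    rate = RATES[model]
    return (input_tokens * rate["input"] + output_tokens * rate["output"]) / 1_000_000


# Price ratios behind the "roughly 25-30x" figure.
input_ratio = RATES["claude-opus-4.5"]["input"] / RATES["qwen-3.6-plus"]["input"]
output_ratio = RATES["claude-opus-4.5"]["output"] / RATES["qwen-3.6-plus"]["output"]

# Hypothetical workload: a 100,000-token prompt with a 4,000-token reply.
qwen_cost = request_cost("qwen-3.6-plus", 100_000, 4_000)
opus_cost = request_cost("claude-opus-4.5", 100_000, 4_000)

print(f"input ratio {input_ratio:.0f}x, output ratio {output_ratio:.0f}x")
print(f"Qwen 3.6-Plus: ${qwen_cost:.3f}  Claude Opus 4.5: ${opus_cost:.3f}")
```

The blended ratio for a real workload falls between the input and output ratios and depends on how output-heavy the requests are.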

What Developers Are Saying

Reaction on Hacker News and r/LocalLLaMA has been mixed. The praise: a top-of-the-leaderboard model at near-open-source pricing is a forcing function for OpenAI and Anthropic, and Western developers can finally route Qwen workloads to a U.S.-hosted API without a Beijing round trip. The criticism: 3.6-Plus is closed-weights, so the partnership does not extend the open ecosystem in the way the recently released Qwen3.6-35B-A3B and Qwen3.6-27B open-weight checkpoints do. Several developers on X also noted that the U.S. and global rollout is described as "coming soon," with full availability staggered across regions.

What This Means for Developers

For teams that already build on Fireworks for serverless inference, Qwen 3.6-Plus is now a drop-in option alongside Llama, DeepSeek, Mixtral and the open-weight Qwen3 models. The 1M-token window and 78.8 SWE-bench score put it in direct competition with Claude Opus 4.5 and GPT-5.5 for agentic coding, RAG over large repositories, and long-context document workflows — at a fraction of the price. Existing Alibaba Model Studio users do not need to migrate, but Fireworks adds U.S. data residency, OpenAI-compatible APIs, and on-platform fine-tuning that Alibaba's hosted endpoint lacks.
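Because the endpoint is described as OpenAI-compatible, a request could be sketched roughly as follows using only the Python standard library. The URL is believed to be Fireworks' OpenAI-compatible chat completions endpoint, but should be verified against current documentation. The model identifier `accounts/fireworks/models/qwen3p6-plus` is a hypothetical placeholder (the real one is on the model card), and the helper names and the `FIREWORKS_API_KEY` environment variable are assumptions made for illustration.

```python
import json
import os
import urllib.request

# Fireworks' OpenAI-compatible chat completions endpoint (verify against current docs).
FIREWORKS_URL = "https://api.fireworks.ai/inference/v1/chat/completions"
# Hypothetical model ID; copy the real one from the Qwen 3.6-Plus model card.
MODEL_ID = "accounts/fireworks/models/qwen3p6-plus"
# Per-turn output ceiling quoted in this article.
MAX_OUTPUT_TOKENS = 65_536


def build_chat_request(prompt: str, max_tokens: int = 1024) -> dict:
    """Build an OpenAI-style chat payload, capped at the quoted output limit."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": min(max_tokens, MAX_OUTPUT_TOKENS),
    }


def send_chat_request(payload: dict, api_key: str) -> dict:
    """POST the payload and return the decoded JSON response."""
    request = urllib.request.Request(
        FIREWORKS_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.load(response)


if __name__ == "__main__":
    payload = build_chat_request("Summarise the open issues in this repository.")
    api_key = os.environ.get("FIREWORKS_API_KEY")
    if api_key:
        reply = send_chat_request(payload, api_key)
        print(reply["choices"][0]["message"]["content"])
    else:
        # No key set: show the payload instead of calling the network.
        print(json.dumps(payload, indent=2))
```

Teams already using an OpenAI-style client would likely only need to change the base URL, API key, and model name, though that should be confirmed against Fireworks' own documentation.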

What's Next

Alibaba's Qwen team has signalled that additional Qwen models — including the multimodal Qwen3-VL family — will follow on Fireworks in the coming months. Developers can request access to the U.S. and global preview directly from the Qwen 3.6-Plus model card. Pricing, context limits, and rate limits are expected to evolve as the partnership exits preview.

Sources

- Alibaba Qwen on X — official partnership announcement

- Fireworks AI: Qwen 3.6-Plus serverless model card — primary product listing and pricing

- Qwen 3.6-Plus: Towards Real World Agents — Alibaba's official model release post

- Artificial Analysis: Qwen 3.6 Plus benchmarks and provider comparison

- Vercel AI Gateway: Qwen 3.6 Plus specs — secondary distribution

- Build Fast with AI: Qwen 3.6 Plus Preview Review — independent benchmarks and capability tests
