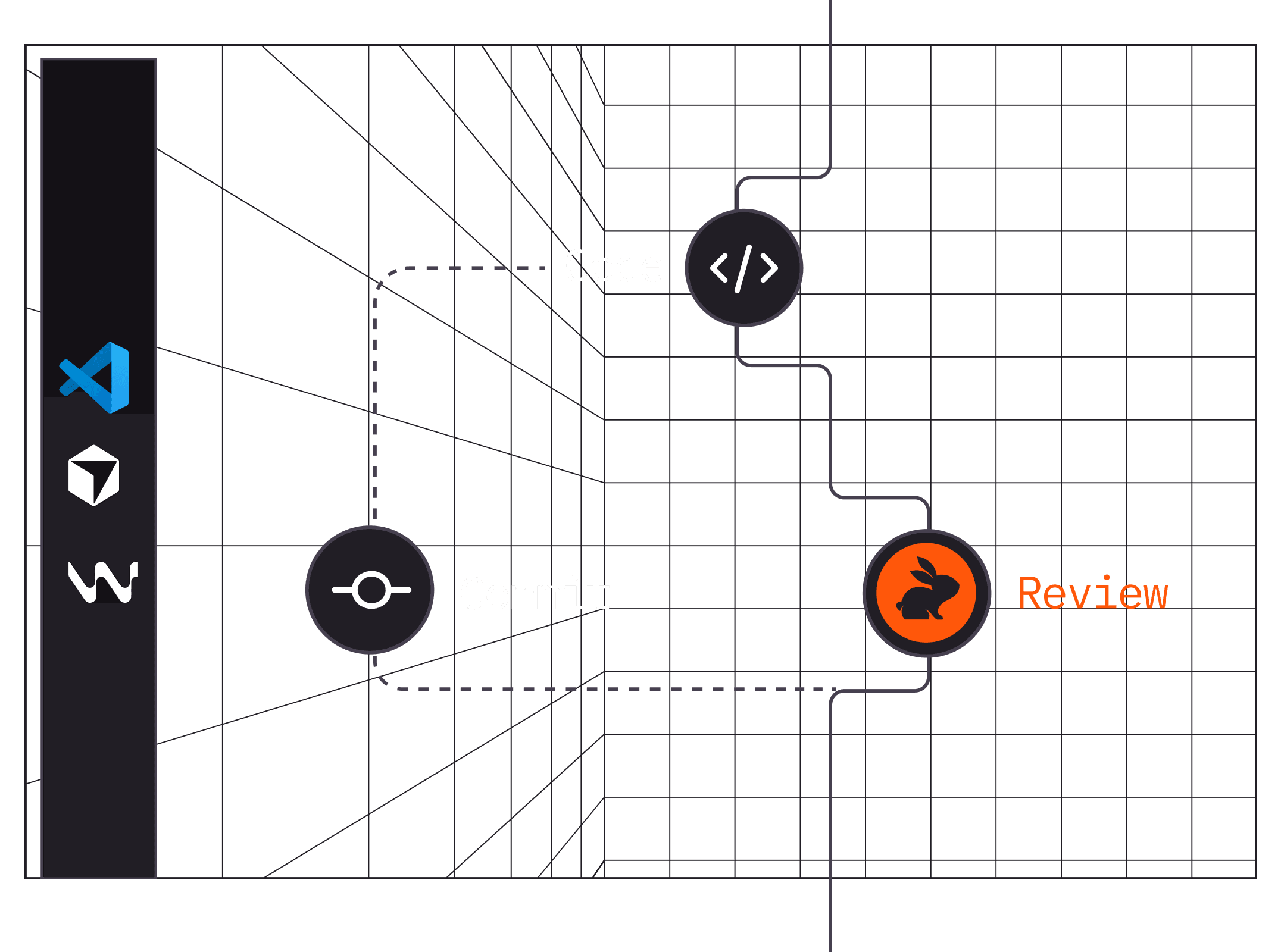

CodeRabbit is an AI code review agent that reads pull requests, catches bugs, and writes inline suggestions across GitHub, GitLab, Azure DevOps, and Bitbucket. It is the most widely deployed dedicated AI code reviewer in 2026, but noise and limited architectural analysis are real trade-offs.

CodeRabbit is an AI code review agent that sits inside your pull requests, IDE, and CLI and gives line-by-line feedback the moment code is pushed. We rate it 78/100 — easily the most complete dedicated AI code reviewer on the market in 2026, excellent for first-pass review and bug catching, but not a replacement for a senior engineer on architectural decisions.

CodeRabbit was founded by CEO Harjot Gill (co-founder of Nirmata, acquired by Cisco), Gur-Eesh Kohli, and Vishu Kaur. The product quietly opened to the public on Product Hunt, where its V2 launch picked up 558+ upvotes and landed the #1 Product of the Day slot.

The bet was simple: most bugs that would otherwise reach production are caught by humans in PR review, not by CI, so automate the reviewer rather than the tests. That bet has paid off. As of early 2026, CodeRabbit has 15,000+ paying customers, has analyzed 3 million+ repositories, has flagged 75 million+ defects, and is, by GitHub Marketplace install counts, the most installed AI app on GitHub. Customers include NVIDIA (CEO Jensen Huang said on stage that "we're using CodeRabbit all over NVIDIA"), plus Chegg, Groupon, Life360, and Mercury.

Funding followed. CodeRabbit closed a $16M Series A led by CRV, then a $60M Series B led by Scale Venture Partners with NVIDIA's NVentures at a $550M valuation, bringing total funding to $88M.

A .coderabbit.yaml file lets teams encode house rules, set path-specific policies, and choose which review categories to silence.

The G2 and Gartner Peer Insights reviews are broadly positive. Users consistently praise three things: setup time ("installed and working on our main repo in under five minutes"), the quality of line-level suggestions, and the fact that it catches copy-paste mistakes and forgotten error handling that exhausted reviewers miss on a Friday afternoon. Industry-side numbers claim a 50%+ reduction in manual review effort and 80% faster review cycles.

The complaints are real and recurring. On r/ExperiencedDevs and Hacker News, the top-voted critique is noise: out-of-the-box CodeRabbit comments on almost everything and can generate 20+ inline suggestions on a medium PR, which trains teams to ignore it. The second recurring complaint is weak architectural analysis — CodeRabbit is great at "this function has a null deref" but rarely catches "this service boundary is wrong." A third complaint, noted in a detailed 2026 review at UC Strategies, is customer support — multiple users report that the support chat routes to an email form with slow response times. Finally, the pricing model (per-contributor, not per-reviewer) irritates teams with many committers who rarely write code: "I'd rather pay for reviewer seats" is the most upvoted pricing gripe on G2.

CodeRabbit bills annually per developer who creates pull requests — seats are reassignable.

| Plan | Price | Key Limits |
|---|---|---|
| Free | $0/user/month | Unlimited public + private repos, PR summarization, IDE reviews, 14-day Pro trial (no credit card) |
| Pro | $24/user/month (billed $288/year) | Agentic chat, Jira & Linear, linters & SAST, 5 MCP connections, 5 reviews/hour, docstrings |
| Pro Plus | $48/user/month (billed $576/year) | All Pro features + custom pre-merge checks, issue planner, 15 MCP connections, 10 reviews/hour, unit test generation |
| Enterprise | Custom (contact sales) | SSO, RBAC, audit logs, API, self-hosting, multi-org, SLAs, dedicated CSM |

A Startup Program offers steep discounts for pre-Series-A companies, and the new free IDE tier means individual developers can use CodeRabbit on uncommitted changes forever at $0.
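To make the per-seat arithmetic concrete, here is a minimal Python sketch that estimates an annual bill from the list prices in the table above. It is not an official calculator: the names `annual_quote`, `Quote`, and `pr_authors` are hypothetical, and the sketch assumes one seat per developer who opens pull requests, with no Startup Program discount, taxes, or Enterprise pricing.

```python
from dataclasses import dataclass

# List prices copied from the pricing table (USD per seat per month, billed annually).
# Enterprise is custom-priced, so it is deliberately left out.
MONTHLY_PRICE_USD = {"Free": 0, "Pro": 24, "Pro Plus": 48}


@dataclass(frozen=True)
class Quote:
    plan: str
    seats: int
    annual_total_usd: int


def annual_quote(plan: str, pr_authors: int) -> Quote:
    """Estimate the yearly bill for a team.

    pr_authors is the number of developers who create pull requests;
    this sketch assumes each of them needs exactly one seat.
    """
    if plan not in MONTHLY_PRICE_USD:
        raise ValueError(f"unknown or custom-priced plan: {plan!r}")
    if pr_authors < 0:
        raise ValueError("pr_authors must be non-negative")
    annual_total = MONTHLY_PRICE_USD[plan] * 12 * pr_authors
    return Quote(plan, pr_authors, annual_total)


if __name__ == "__main__":
    for plan in ("Pro", "Pro Plus"):
        quote = annual_quote(plan, pr_authors=10)
        print(f"{quote.plan}: {quote.seats} seats -> ${quote.annual_total_usd:,}/year")
```

For a team with 10 pull-request authors, this works out to $2,880 per year on Pro and $5,760 per year on Pro Plus. Because billing follows the people who open pull requests, the input that matters is the count of PR authors, not the count of reviewers.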

Best for: Teams of 5–200 engineers on any Git platform that already practice PR review and want to automate the boring half — style, null-checks, security, lint. Especially strong for mixed-language monorepos and for teams on GitLab, Bitbucket, or Azure DevOps who can't use Copilot Code Review.

Not ideal for: Solo developers on private repos (the free IDE tier covers them), teams looking for deep architectural critique (pair with a senior human), or cost-sensitive open-source maintainers (GitHub Actions-based alternatives like ai-pr-reviewer — ironically CodeRabbit's own open-source repo — or Qodo Merge may be cheaper).

Pros:

Cons:

Needs .coderabbit.yaml tuning within the first week or the team starts ignoring it.

The closest rivals are Qodo (formerly Codium), the stronger open-source option with a generous free tier but GitHub-first; Graphite Reviewer, which has a beautiful UI but is GitHub-only; GitHub Copilot Code Review, which is cheap if you already pay for Copilot Business but shallow; and Cursor BugBot, which is tightly integrated with the Cursor IDE but has limited platform support. For background on the broader AI coding space, see our reviews of Bolt.new and Windsurf.

At $24/contributor/month, CodeRabbit is worth it if your team already does PR review and ships to multiple Git platforms. The time saved on first-pass review pays for itself inside one sprint for any team of 5+ engineers — this is why 15,000+ paying customers, including NVIDIA, run it in production. It is not a substitute for senior human reviewers on architecture, and you will need to tune .coderabbit.yaml in week one to suppress noise. But as a reviewer-assistant, it is the category leader in 2026 and the only serious choice for teams on GitLab, Azure DevOps, or Bitbucket. Our score: 78/100.
