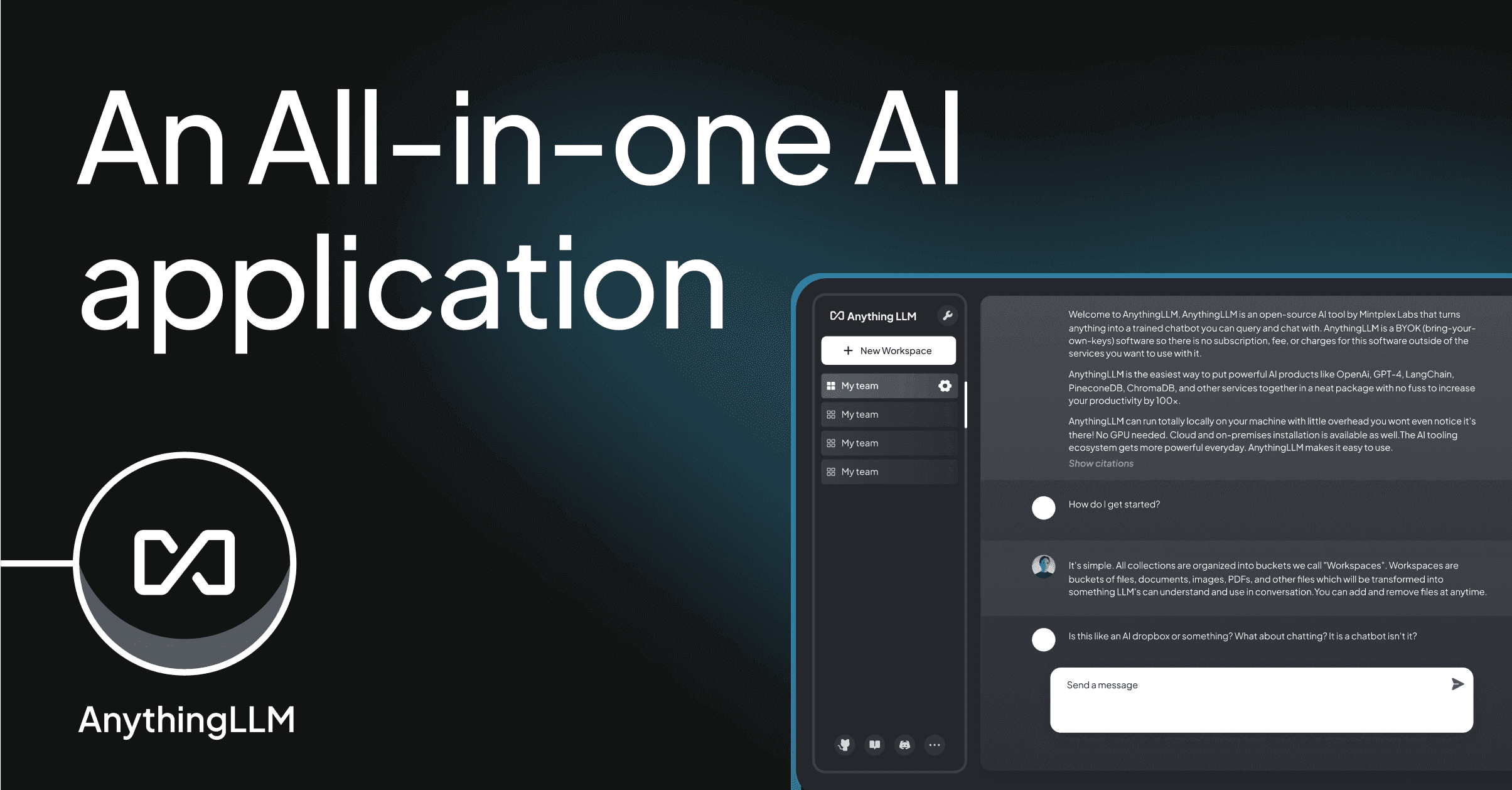

AnythingLLM is the MIT-licensed all-in-one AI app from Mintplex Labs that lets you chat with documents, run no-code agents, and plug in any LLM — locally on Mac/Windows/Linux or self-hosted via Docker, with a hosted Cloud option starting at $50/month.

AnythingLLM is the all-in-one open-source AI application from Mintplex Labs that turns any laptop or self-hosted server into a private ChatGPT — chat with your documents, run no-code AI agents, plug in any LLM (cloud or local), and ship a multi-user knowledge assistant in minutes. We rate it 88/100 — the right pick for privacy-focused teams, solo developers and self-hosters who want a polished RAG + agents stack under MIT, and the wrong pick only if you want a fully managed, no-API-key SaaS with consumer-grade polish on day one.

AnythingLLM was created by Tim Carambat and the Mintplex Labs team. The first commit landed on June 4, 2023, and the project has since become one of the most-starred open-source AI apps on GitHub: the repo at Mintplex-Labs/anything-llm sits at 59,074 stars, 6,381 forks and 191 contributors, with the latest release, v1.12.1, shipped on April 22, 2026.

The pitch is simple: install AnythingLLM Desktop on Mac, Windows or Linux — or run the Docker image on a homelab, NAS or VPS — point it at your documents, pick any LLM provider (OpenAI, Anthropic, Ollama, LM Studio, OpenRouter, Groq, Gemini, Bedrock, Azure, Mistral, DeepSeek, and 25+ others), and you have a private chatbot with RAG, agents and a built-in API. Unlike ChatGPT or Claude, your documents never leave your machine unless you explicitly choose a cloud LLM provider, and the entire codebase is verifiably MIT-licensed.

Sentiment across r/LocalLLaMA, r/selfhosted and r/Ollama is broadly positive — the most upvoted threads describe AnythingLLM as the easiest way to get a usable RAG chat over personal documents without writing code, and frequently rank it ahead of LM Studio's built-in chat for document-heavy use cases. On Hacker News, commenters consistently praise the breadth of LLM provider integrations and the MIT license. Recurring complaints are real and worth knowing: large PDFs occasionally fail to chunk cleanly on the Desktop build (improving with each release), the Desktop app does not support multi-user (you must run the Docker server for that), the default LanceDB index can grow large on heavy libraries and benefits from periodic re-embedding, and the documentation occasionally lags new features by a release or two. Several users also note that hosted Cloud pricing is steeper than self-hosting — which is by design, since the Docker image is free.

AnythingLLM has three deployment modes: Desktop (free), Docker self-hosted (free), and managed Hosted Cloud. The MIT license applies to both Desktop and Docker editions equally, with no feature paywalls and no commercial-revenue threshold.

| Plan | Price | Includes |
|---|---|---|
| Desktop | $0 forever | Mac/Windows/Linux app, all LLM providers, RAG, agents, built-in API, local-only by default. Single-user. |
| Docker (self-hosted) | $0 forever | Everything in Desktop plus multi-user permissions, embeddable chat widget, admin controls, API keys. |
| Cloud Basic | $50 / month | Private hosted instance, custom subdomain, <5 users, <100 documents, RAG & agents, BYO LLM API key. |
| Cloud Pro | $99 / month | Larger private instance, more users and documents, 72-hour support SLA, faster compute. |
| Enterprise | Contact | On-premise install, custom domain, custom SLA, white-glove integration. |

You still pay LLM provider costs separately when using cloud models — AnythingLLM does not bundle inference. The cheapest realistic setup is Desktop + Ollama + a local model, which costs $0 indefinitely.
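To make the trade-off concrete, here is a rough sketch of the yearly arithmetic using the list prices from the table above. The `Plan` class, the `annual_cost` helper and the LLM and hosting spend figures are illustrative assumptions, not anything AnythingLLM ships; Enterprise is left out because its price is quote-only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_fee_usd: float  # subscription price taken from the pricing table


# List prices from the table; Enterprise is omitted (price is "Contact").
PLANS = [
    Plan("Desktop", 0.0),
    Plan("Docker (self-hosted)", 0.0),
    Plan("Cloud Basic", 50.0),
    Plan("Cloud Pro", 99.0),
]


def annual_cost(
    plan: Plan,
    monthly_llm_spend_usd: float = 0.0,  # assumption: your own provider bill
    monthly_hosting_usd: float = 0.0,    # assumption: VPS or power cost, if any
) -> float:
    """Yearly total: subscription plus whatever you pay for inference and hosting."""
    return 12 * (plan.monthly_fee_usd + monthly_llm_spend_usd + monthly_hosting_usd)


if __name__ == "__main__":
    # Hypothetical scenario: $20/month of cloud LLM usage on every plan.
    for plan in PLANS:
        total = annual_cost(plan, monthly_llm_spend_usd=20.0)
        print(f"{plan.name}: ${total:,.2f} per year")
```

With a local model, both optional arguments stay at zero and the Desktop and Docker rows come out to $0, which is the "cheapest realistic setup" described above. Plug in your own provider bill to see where a Cloud tier starts to make sense.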

Best for: Privacy-conscious solo professionals (lawyers, researchers, analysts) who need a private "chat with my files" tool without sending documents to OpenAI; small teams that want a self-hosted alternative to ChatGPT Team or Notion AI; developers prototyping RAG and agent applications who want a polished UI before building their own; and anyone running a homelab who already has Ollama or LM Studio and wants a real workspace UI on top.

Not ideal for: Non-technical individuals who want a fully managed SaaS with no setup or API-key juggling — ChatGPT Plus or Claude Pro are smoother out of the box; and large enterprises that need SOC 2 + SSO + audit logs as table stakes — those land in the Cloud Pro / Enterprise tiers, where the pricing premium starts to compete with managed alternatives.

Pros:

Cons:

The closest open-source alternatives are Open WebUI — a great Ollama-first ChatGPT clone, but with weaker document-RAG ergonomics — and Dify, which is more focused on building production AI apps and APIs than on personal document chat. Jan is a strong Desktop-only competitor for purely local chat but lacks AnythingLLM's multi-user mode, agents and MCP support. On the closed-source side, NotebookLM is friendlier for casual users, while ChatGPT Team and Claude Projects offer cloud convenience at the cost of privacy.

Yes — if you want a private, all-in-one ChatGPT replacement that you control end-to-end, AnythingLLM is the strongest open-source pick on the market in 2026. The combination of MIT licensing, 25+ LLM providers, native MCP, agents, multi-user Docker mode and a polished Desktop client is genuinely unmatched in the self-hosted RAG category. Stick with Desktop or Docker for the best ROI; only consider Cloud Basic/Pro if you cannot run a server and need a managed instance with a custom subdomain. Our 88/100 reflects an excellent product with minor rough edges in PDF handling and a steeper-than-average learning curve on agents.
