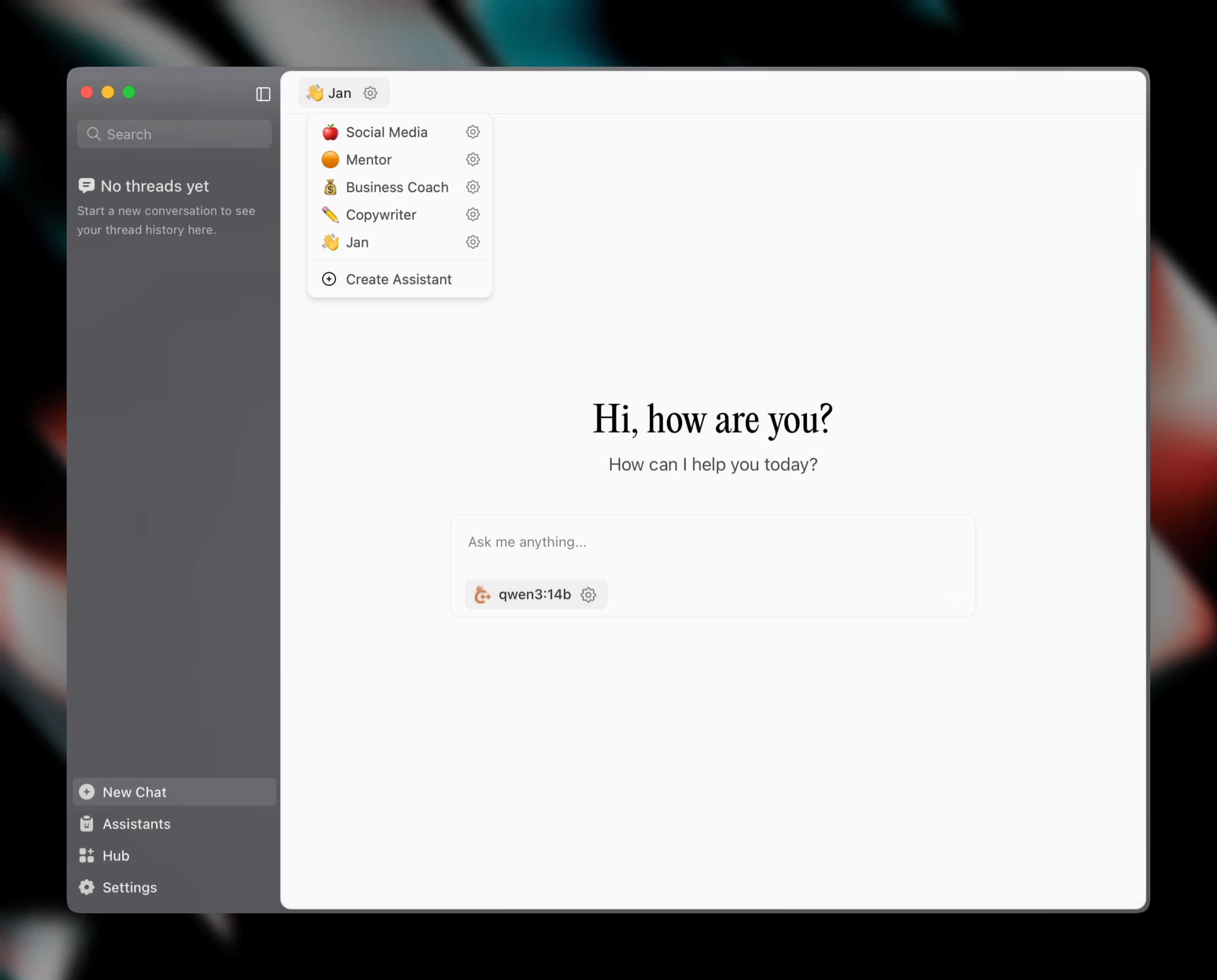

Jan is an open-source desktop app that runs Llama, Qwen, DeepSeek and other LLMs locally with full privacy — or routes them to cloud providers through a single ChatGPT-style UI. Free, self-hostable, Apache 2.0.

Jan is an open-source ChatGPT alternative that runs large language models 100% offline on your own computer, while letting you optionally connect to cloud models like GPT-5.5, Claude, Gemini, Mistral and DeepSeek from the same interface. We rate it 83/100: the cleanest, most polished open-source local LLM desktop app available right now, and a serious contender against LM Studio for anyone who values open source and privacy.

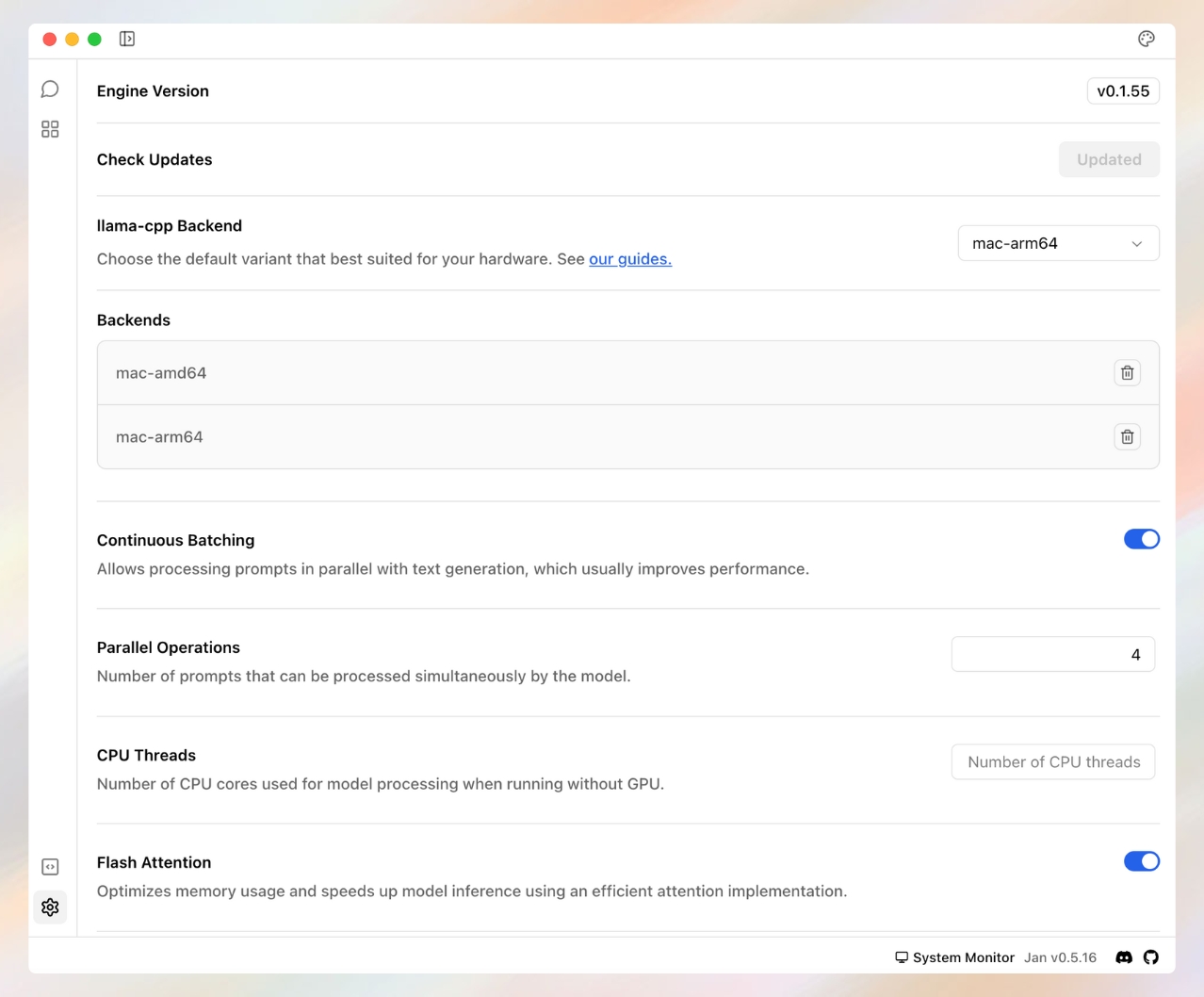

Jan is a privacy-first desktop application built by Menlo Research that ships under an Apache 2.0 license. The project is developed on GitHub and has grown to 42,000+ stars and over 5 million downloads across macOS, Windows and Linux. It uses llama.cpp and Apple MLX as its inference backends, wrapped in a Tauri-based UI that feels closer to ChatGPT than the developer-tool aesthetic of Ollama or Text Generation WebUI.

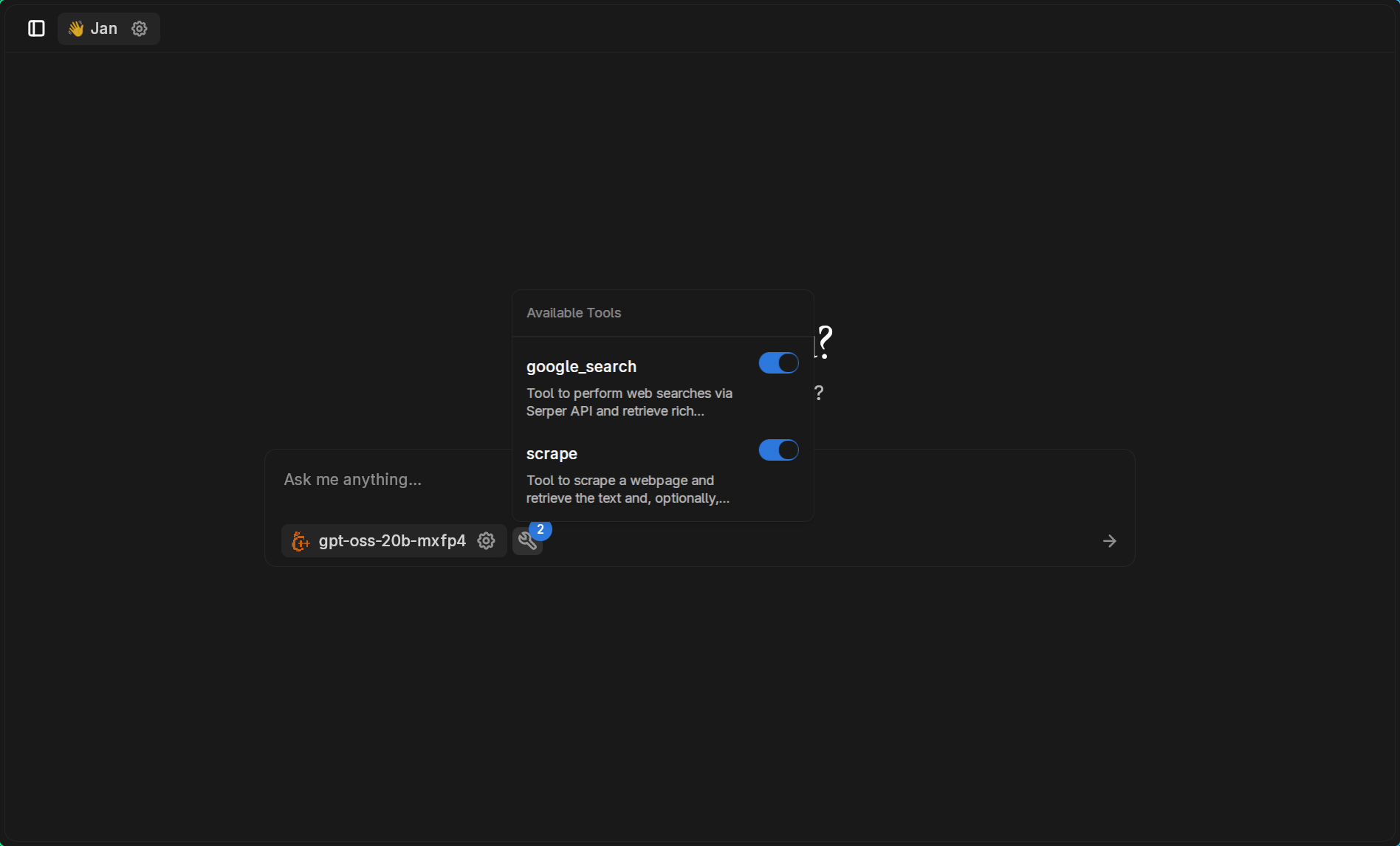

The pitch is simple: download the app, pick a model from a curated list (or paste any HuggingFace GGUF link), and start chatting. Nothing leaves the device unless you explicitly add a cloud provider. For developers, Jan also exposes an OpenAI-compatible REST API at localhost:1337, which means you can drop it in as a private replacement for the OpenAI SDK in any existing project.

The base URL http://localhost:1337/v1 exposes the same chat-completions, models and embeddings endpoints as OpenAI, so it works as a drop-in replacement in code that already uses the OpenAI SDK.
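As a rough illustration of what "OpenAI-compatible" means in practice, here is a minimal sketch that talks to the local endpoint using only the Python standard library. The base URL comes from the text above. The helper names `build_chat_request` and `chat`, the optional `api_key` parameter, and any model id you pass in are assumptions for illustration. Check the model ids and API-key setting shown in your own Jan install before relying on it.

```python
import json
import urllib.request

# Base URL as stated in the article; Jan's local API server must be running.
BASE_URL = "http://localhost:1337/v1"


def build_chat_request(model: str, prompt: str, api_key: str = "") -> urllib.request.Request:
    """Build an OpenAI-style chat-completions request without sending it."""
    payload = {
        "model": model,  # assumption: use an id that your Jan install actually lists
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Assumption: only needed if your local server is configured to expect a key.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def chat(model: str, prompt: str, api_key: str = "") -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(model, prompt, api_key)) as resp:
        body = json.load(resp)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the server running, a call such as `chat("my-local-model", "Say hello in one sentence.")` should return the reply text, where `my-local-model` is a placeholder for a model you have downloaded. Code that already uses the OpenAI SDK would typically only need its base URL pointed at the same address.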

On Reddit's r/LocalLLaMA, the most upvoted Jan threads consistently praise it as the best open-source choice for non-technical users — one user puts it as "my pick over LM Studio because it's actually open source." Reviewers on independent comparison sites like neurocanvas.net and promptquorum.com repeatedly call out the UI polish, the plugin system, and the cleaner model-management experience versus Ollama's CLI-first workflow.

The complaints are also consistent and worth knowing. Several Reddit threads report higher idle memory usage than LM Studio, occasional slowdowns when switching between very large models, and a documentation site that lags behind feature releases — the v0.6 UI revamp shipped weeks before the docs caught up. Apple Silicon users on M1/M2 with 8 GB RAM report Jan struggling with anything past a 7B-parameter model, which is a hardware reality more than a Jan-specific issue.

Jan is fully free and Apache 2.0 licensed. There is no Pro tier, no usage cap, no telemetry-driven plan upsell. The only money you'll spend is on cloud API usage if you choose to connect a paid provider — and those keys go directly to the provider, not to Jan.

| Plan | Price | Key Limits |
|---|---|---|
| Jan Desktop App | $0 | Unlimited local models, unlimited messages, no account required |
| Cloud Models (BYO Key) | Pay provider directly | Routed through your own OpenAI / Anthropic / Mistral / Groq keys |
| Source Code | Free (Apache 2.0) | Self-build, fork, redistribute; commercial use allowed |

The catch: you provide the hardware. A reasonable local experience needs at least 8 GB of RAM for 3B models, 16 GB for 7B, and 32 GB for anything in the 13B+ range. Apple Silicon delivers the best price-per-token by a wide margin in 2026.
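The rule of thumb above can be written down as a tiny lookup, which is handy when deciding which models to offer on a given machine. This is only a sketch of this review's thresholds, not a measurement. The function names are hypothetical, and treating sizes between 7B and 13B as needing 32 GB is an assumption, since the text does not cover that range.

```python
def min_ram_gb(params_billion: float) -> int:
    """Return the minimum RAM (GB) this review suggests for a model size."""
    if params_billion <= 0:
        raise ValueError("params_billion must be positive")
    if params_billion <= 3:
        return 8
    if params_billion <= 7:
        return 16
    # Assumption: anything above 7B is rounded up to the 13B+ tier.
    return 32


def fits(params_billion: float, ram_gb: int) -> bool:
    """True if the machine meets the suggested minimum for that model size."""
    return ram_gb >= min_ram_gb(params_billion)
```

For example, `fits(7, 8)` is false under these thresholds, which matches the reports of 8 GB machines struggling past small models.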

Best for: Privacy-conscious users who want a ChatGPT-style experience without sending data to a cloud, developers building OpenAI-compatible apps that need an offline fallback, and teams in regulated industries (legal, healthcare, finance) where the model has to stay on-device. Apple Silicon owners get the most out of Jan thanks to MLX acceleration.

Not ideal for: Casual users on a base-model laptop with 8 GB RAM (the cloud version of ChatGPT will feel snappier), and developers who only want a CLI — Ollama or llamafile fit that use case better. If you need image generation, Jan supports image inputs but does not run diffusion models locally.


Ollama is the CLI-first counterpart: lighter footprint, no GUI by default, and the most popular pick for power users who want scripting. LM Studio has the most polished UI of any local LLM app and slightly better memory efficiency, but it is closed source, which is where Jan's open-source license sets it apart. Open WebUI is the self-hosted browser-based alternative that pairs well with Ollama on a home server. llamafile is the easiest single-binary option but lacks Jan's plugin and MCP ecosystem.

If you want a ChatGPT-shaped app on your own laptop without sending data to OpenAI, Jan is the easiest way to get there in 2026. It has earned its 42k stars by being the best-balanced option between LM Studio's polish and Ollama's openness — and the Apache 2.0 license means it stays that way. Power users with strong CLI habits will still prefer Ollama, and 8 GB-RAM laptops will still struggle with 13B+ models, but for everyone else, Jan is the local LLM client to install first. We rate it 83/100.
