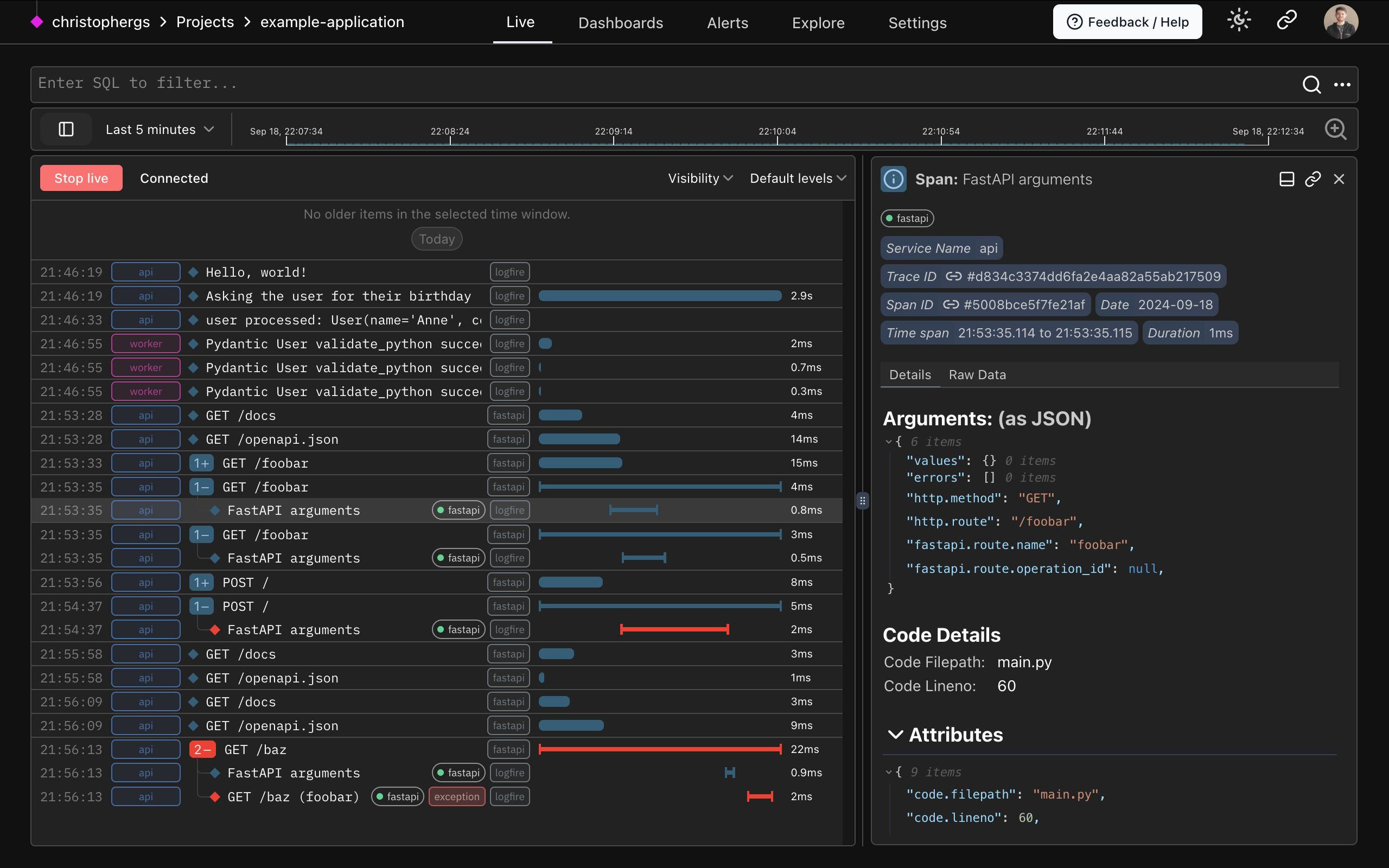

Pydantic Logfire is an AI-native observability platform from the Pydantic team. It speaks OpenTelemetry, exposes every span via Postgres-flavored SQL, and starts free with 10M records per month.

Pydantic Logfire is a production-grade observability platform purpose-built for Python and AI workloads, from the same team behind Pydantic Validation. We rate it 88/100 — the most natural choice for any Python team running LLM agents, FastAPI services, or Pydantic AI in production today.

Logfire is an opinionated observability layer on top of OpenTelemetry, founded by Samuel Colvin (creator of Pydantic) and the Pydantic team. It launched in public beta in , hit general availability later that year, and has since added SDKs for JavaScript/TypeScript and Rust alongside its first-class Python integration. The hosted backend is closed source, but the SDKs and instrumentation packages are MIT-licensed on GitHub.

The product solves a specific pain: most LLM observability tools (Langfuse, LangSmith, Arize) only see the model layer, while general APM tools (Datadog, New Relic) struggle to display GenAI spans usefully. Logfire is one of the few platforms that does both well — it traces your entire stack (HTTP, DB, queues, background jobs) and renders LLM calls as readable conversation panels with token usage, latency and cost.

A call to logfire.configure() plus a one-line auto-instrumentation call for FastAPI, SQLAlchemy, HTTPX, Celery, Redis, AsyncPG and ~40 other libraries gets you full traces in under five minutes.
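As a rough illustration of that setup, here is a minimal sketch for a FastAPI service that also makes outbound HTTPX calls. The helper name `enable_tracing` and the `demo-api` service name are hypothetical, chosen for this example. It assumes the `logfire` package is installed and that a write token is already available to the SDK; check the Logfire docs for the exact options your version supports.

```python
def enable_tracing(app, service_name="demo-api"):
    """Turn on Logfire tracing for a FastAPI app and outbound HTTPX calls.

    `enable_tracing` and the default service name are illustrative only.
    """
    # Imported inside the function so this module still loads in
    # environments where the logfire package is not installed.
    import logfire

    # Configure the SDK once per process, before instrumenting anything.
    logfire.configure(service_name=service_name)

    # One call per library: incoming requests, then outgoing HTTP calls.
    logfire.instrument_fastapi(app)
    logfire.instrument_httpx()
    return app
```

In an application module this would typically be called once at startup, for example `app = enable_tracing(FastAPI())`. The other libraries named above have their own instrumentation calls, which follow the same one-line pattern.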

Reception inside the Python community has been unusually warm. On Hacker News, the original launch thread sat near the top of the front page for a full day, with senior Python engineers calling out the SQL interface and OTel compatibility as long-overdue. On Reddit's r/Python and r/LocalLLaMA, the recurring praise is twofold: setup is genuinely a one-liner for FastAPI / Pydantic AI users, and the dashboard does not bury you in pre-built widgets you cannot edit.

The most common complaint, repeated on Reddit and in the GitHub issue tracker, is that non-Python ecosystems (Node, Go, Rust) feel like second-class citizens: the JS/TS SDK is solid, but its documentation lags Python by months. A second, fairer complaint is that the backend is closed source and self-hosting is listed only on the Enterprise plan, which is a hard pass for some regulated teams.

Pydantic restructured pricing effective , with the same headline $2 per million records overage rate but a clearer team tier. The free Personal plan remains generous enough that most solo developers will never pay.

| Plan | Price | Included Records | Seats / Projects |
|---|---|---|---|
| Personal | $0/month | 10M records, 30-day retention | 1 seat / 3 projects |
| Team | $49/month | 10M records + $2/M overage | 5 seats / 5 projects |
| Growth | $249/month | 10M records + $2/M overage | Unlimited seats and projects |
| Enterprise | Contact sales | Self-host, custom retention, SSO | Custom |

Pydantic publishes its own pricing comparison: at 5 users and 50M spans per month, it claims Logfire is 8× cheaper than Arize, 27× cheaper than Langfuse, and 40× cheaper than LangSmith. We have not independently verified those exact multiples, but the per-record price is genuinely below the rest of the market.
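To make the 50M-records scenario concrete, the sketch below estimates the monthly Logfire bill from the table's figures. It assumes overage is billed linearly at $2 per million records beyond the included 10M, with no rounding or volume tiers, and it treats the "spans" in the comparison as billable records. The `Plan` class and `monthly_cost` function are illustrative names, not part of any Logfire API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    base_usd: float                 # flat monthly price
    included_millions: float        # records included in the base price
    overage_usd_per_million: float  # price per extra million records


# Figures copied from the pricing table above (paid plans only).
PLANS = {
    "Team": Plan("Team", 49, 10, 2),
    "Growth": Plan("Growth", 249, 10, 2),
}


def monthly_cost(plan: Plan, records_millions: float) -> float:
    """Base price plus linear overage; assumes no rounding or tiers."""
    extra = max(0.0, records_millions - plan.included_millions)
    return plan.base_usd + extra * plan.overage_usd_per_million


for plan in PLANS.values():
    print(f"{plan.name}: ${monthly_cost(plan, 50):.0f}/month at 50M records")
# Team: $129/month at 50M records
# Growth: $329/month at 50M records
```

Under these assumptions a five-person team on the Team plan would pay about $129 a month at 50M records, of which $80 is overage.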

Best for: Python teams shipping FastAPI, Django or async services to production; anyone using Pydantic AI, Vercel AI SDK or LangChain who wants traces and LLM calls in one view; small startups who would rather pay $0–49 a month than negotiate Datadog contracts.

Not ideal for: Polyglot teams whose primary language is Go or Java (the Python ergonomics are the moat); regulated organisations that must self-host their telemetry plane and cannot take the Enterprise plan; teams already invested in Datadog or Honeycomb who are happy with what they have.

Pros:

Cons:

If Logfire does not fit, the closest alternatives are Langfuse (open-source, self-hostable, LLM-only focus), LangSmith (deeper LangChain integration, more expensive), and Arize (heavier on ML ops and evals, but pricier at scale). For pure APM without the AI angle, SigNoz remains a solid open-source pick — see our SigNoz review.

Is Logfire worth it? For any Python team that calls an LLM in production, even occasionally, the answer is yes, and the free tier means there is essentially no reason not to try it. The combination of OpenTelemetry compatibility, SQL queries, and a UI built by people who clearly use it themselves makes Logfire feel like a tool that respects developers. We are confident in the 88/100 rating; the points it loses are for the closed-source backend and the fact that non-Python ecosystems are still catching up.
