

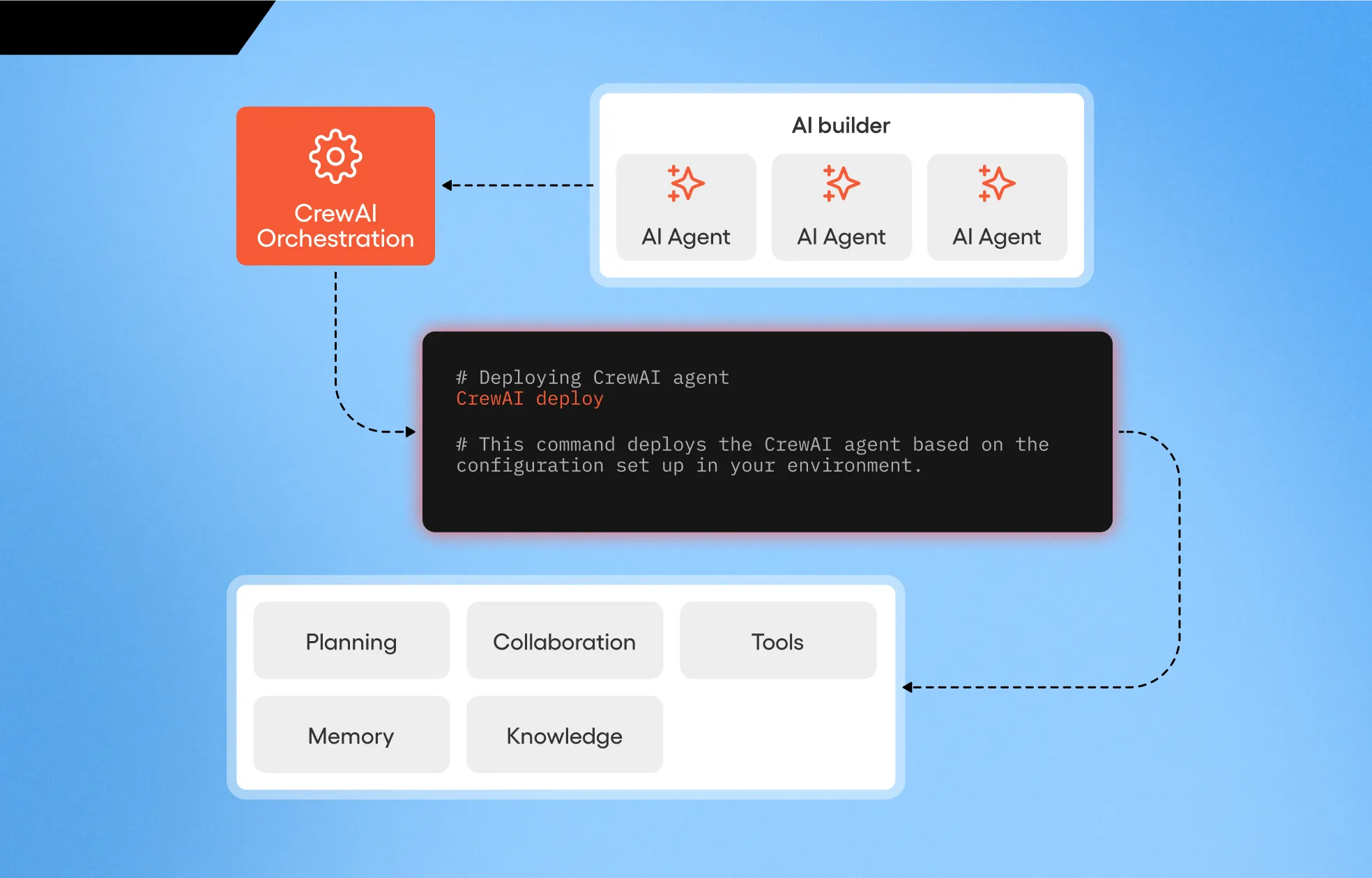

CrewAI is the open-source multi-agent framework that lets developers spin up role-based teams of LLM agents in minutes. With 50,800+ GitHub stars and a hosted Enterprise platform, it has become the default choice for production agent orchestration in 2026.

CrewAI is an open-source Python framework for orchestrating role-playing, autonomous AI agents — and the commercial Enterprise platform built on top of it. We rate it 84/100: the cleanest, fastest path from "prompt" to "production multi-agent system" if you can stomach a smaller ecosystem and some telemetry friction.

CrewAI is a framework for building multi-agent AI workflows where each agent has a role, a goal, and a backstory, and tasks are assigned to agents inside a "crew." It was created by João Moura, formerly Director of AI Engineering at Clearbit (acquired by HubSpot), who released the initial open-source version before formally launching the company in January 2024 with COO Rob Bailey.
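To make the role/goal/backstory idea concrete, here is a minimal plain-Python sketch of the data model described above. It is not CrewAI's API: the `Agent`, `Task`, and `Crew` classes and the `system_prompt` and `plan` helpers are hypothetical stand-ins written for illustration, and no LLM is called.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Hypothetical stand-in for an agent: who it is and what it is for."""
    role: str
    goal: str
    backstory: str

    def system_prompt(self) -> str:
        # Assumption: the three fields are folded into a single prompt string.
        return f"You are {self.role}. {self.backstory} Your goal: {self.goal}"


@dataclass
class Task:
    """A unit of work assigned to exactly one agent."""
    description: str
    agent: Agent


@dataclass
class Crew:
    """Groups agents and lists their tasks in the order given."""
    agents: list[Agent]
    tasks: list[Task] = field(default_factory=list)

    def plan(self) -> list[str]:
        # No model is invoked; this only shows which agent owns which task.
        return [f"{t.agent.role} -> {t.description}" for t in self.tasks]


researcher = Agent(
    role="Researcher",
    goal="Collect recent facts about a topic",
    backstory="You are a careful analyst who cites sources.",
)
writer = Agent(
    role="Writer",
    goal="Turn research notes into a short article",
    backstory="You are a concise technical writer.",
)
crew = Crew(
    agents=[researcher, writer],
    tasks=[
        Task("Gather five facts about multi-agent frameworks", researcher),
        Task("Draft a 300-word summary from the facts", writer),
    ],
)

for line in crew.plan():
    print(line)
```

The point of the sketch is the shape of the abstraction: agents carry identity, tasks carry work, and the crew is the container that pairs them. The real framework adds LLM calls, tools, and process control on top of that shape.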

The repository at crewAIInc/crewAI has reached 50,800+ stars and 7,000+ forks, and the latest release is v1.14.4. CrewAI is licensed under MIT, and according to the company it now powers 1.4 billion agentic executions across roughly 60% of the Fortune 500. In October 2024 the company announced $18M in total funding, including a Series A led by Insight Partners and an inception round from boldstart ventures.

Sentiment is mixed-positive. On r/LangChain and r/AI_Agents, the most upvoted threads praise CrewAI for being "the easiest framework to actually finish an agent project," with the role/goal/backstory abstraction repeatedly cited as the killer feature. The recent removal of the LangChain dependency in v1.x — once the #1 complaint — has further warmed reception on Hacker News.

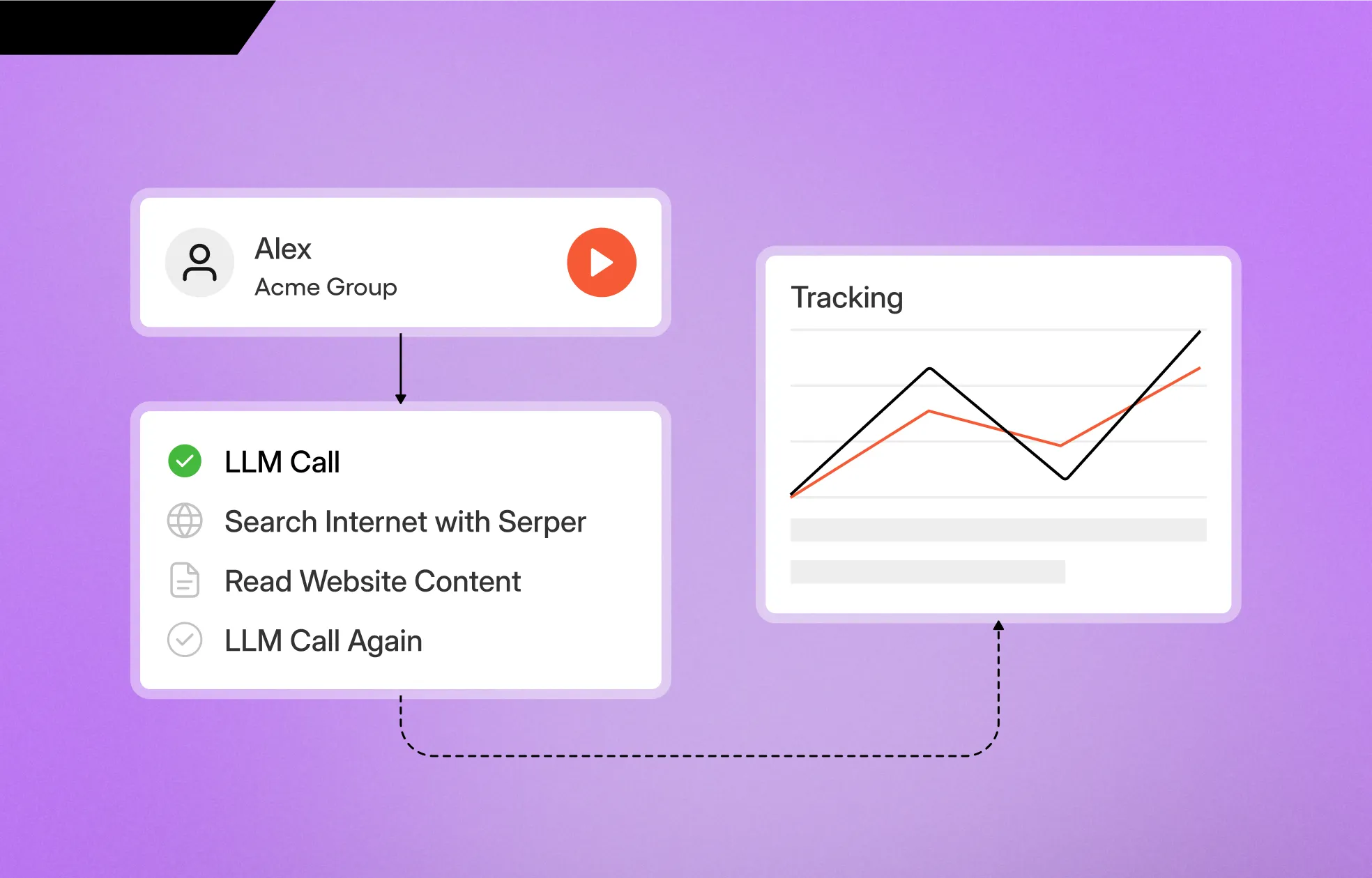

The recurring criticisms are worth taking seriously. A widely cited Medium post by Ondřej Popelka notes that observability, speed, and token costs become real problems at scale without bolt-on tooling. Several developers on Reddit have flagged that CrewAI's telemetry collection cannot easily be disabled, which is a non-starter in regulated environments. And while the role metaphor is elegant, a few users on Hacker News found it "too rigid" for tasks like "update this library," where the work decomposes into many hidden steps.

| Plan | Price | Key Limits |
|---|---|---|
| Open-source framework | $0 / forever | MIT license, no usage limits, you pay only for your own LLM calls |
| AMP Free | $0 / month | 50 executions/month, 1 deployed crew, 1 seat |
| AMP Basic | $99 / month | Hosted dashboard, increased executions, suited to small teams |
| AMP Enterprise | Custom | 10,000 executions/month, up to 50 crews, SOC2, SSO, PII masking, SLAs |

The open-source framework is genuinely free and has no commercial restrictions. The paid AMP tiers are execution-based — one crew run is one execution regardless of how many agents or tasks it spans. For most indie projects, the free framework on your own infrastructure is the right starting point; AMP only earns its keep when you need hosted observability, role-based access control and SOC2 compliance.
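The execution-based billing rule can be sketched in a few lines of Python. This is an illustration of the counting rule stated above, not an official billing calculator: the `CrewRun` record and both helper functions are hypothetical, and the only figure taken from the review is the AMP Free limit of 50 executions per month.

```python
from dataclasses import dataclass

# AMP Free limit as quoted in the pricing table above.
FREE_TIER_EXECUTIONS = 50


@dataclass
class CrewRun:
    """Hypothetical record of one crew run in a billing month."""
    crew_name: str
    agents: int
    tasks: int


def count_executions(runs: list[CrewRun]) -> int:
    # One crew run is one execution, however many agents or tasks it spans.
    return len(runs)


def fits_free_tier(runs: list[CrewRun]) -> bool:
    return count_executions(runs) <= FREE_TIER_EXECUTIONS


# 40 runs of a large crew plus 10 runs of a tiny one still total 50 executions.
month = [CrewRun("research-and-write", agents=5, tasks=8) for _ in range(40)]
month += [CrewRun("triage", agents=1, tasks=1) for _ in range(10)]

print(count_executions(month), fits_free_tier(month))
```

Because agent and task counts do not enter the total, a crew that does more work per run is cheaper per unit of work under this model than many small runs.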

Best for: Python developers and small AI teams building production agent workflows where the work naturally decomposes into team-shaped roles — research-and-write pipelines, customer-support triage, sales-prospecting bots, internal RAG operators. Enterprises that need a hosted control plane without building one from scratch will find AMP a faster path than rolling their own on top of LangGraph.

Not ideal for: Teams already invested in Langfuse + custom orchestration, JavaScript-first stacks (CrewAI is Python-first), or projects where the agent graph is highly stateful and non-linear — Mastra or LangGraph remain the better fit there.

Pros:

Cons:

The closest direct alternatives are LangGraph (more flexible graph-based orchestration, steeper curve), AutoGen by Microsoft (research-heavier, conversational paradigm), and Mastra (TypeScript-first agent framework — see our Mastra review). For pure observability without owning orchestration, Langfuse remains the best companion regardless of which framework you choose.

Yes — if your problem actually looks like a team. CrewAI's role-based abstraction is the fastest way we have seen to go from "prompt" to "working multi-agent pipeline," and the open-source framework is free for any commercial use. The 84/100 reflects how well it executes on its core thesis, with deductions for the opaque telemetry, the Python-only SDK, and the still-maturing tool ecosystem versus LangChain. For most teams in 2026 looking at multi-agent orchestration, this is the default starting point.
