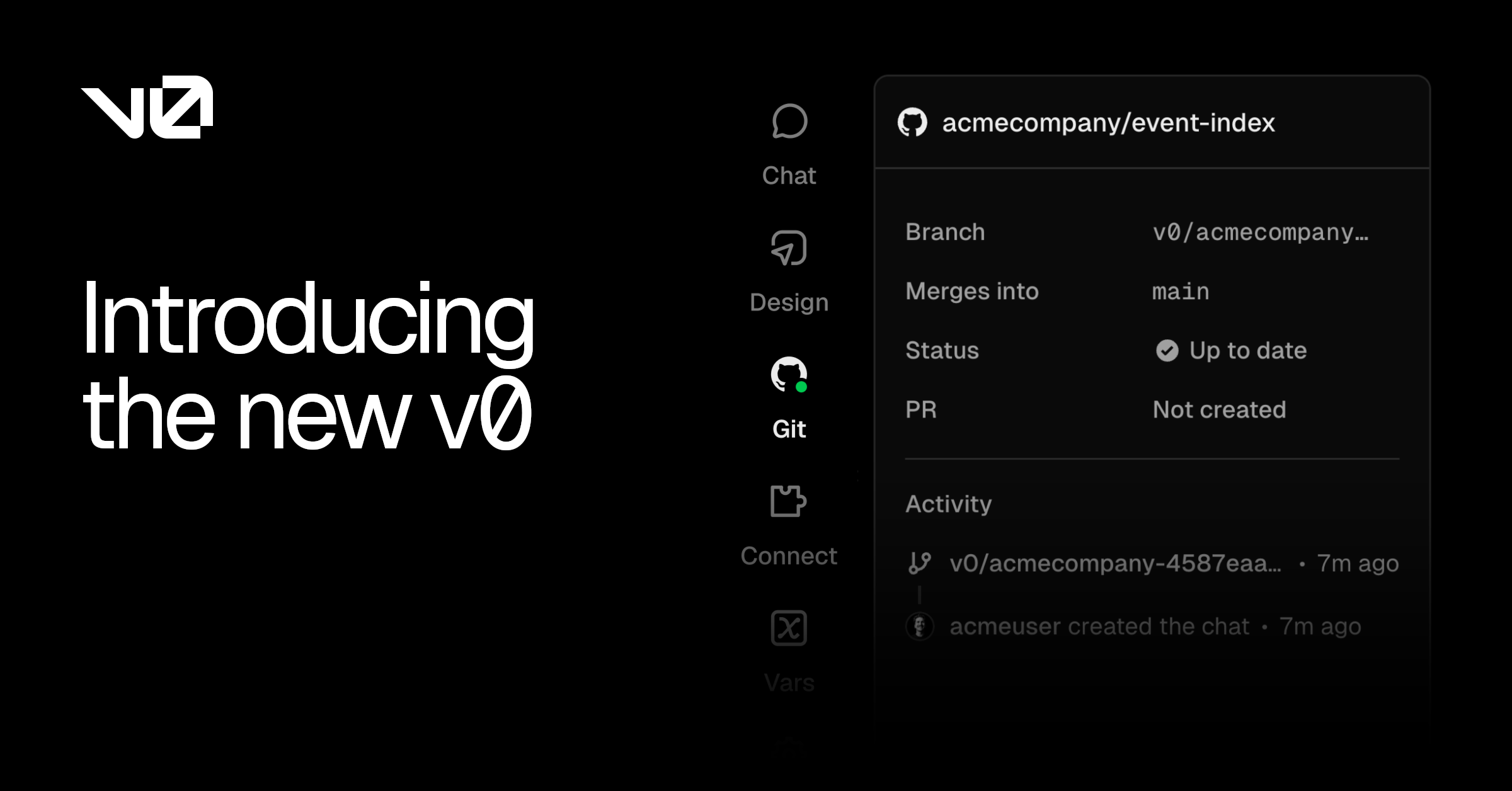

v0 by Vercel is a chat-based AI app builder that turns natural-language prompts into production-ready React, Next.js, and Tailwind CSS code, with one-click deploys back to Vercel. We rate it 83/100 — a near-essential prototyping tool for anyone living in the Next.js ecosystem, with sharp limits once you push past UI generation into messy backend logic.

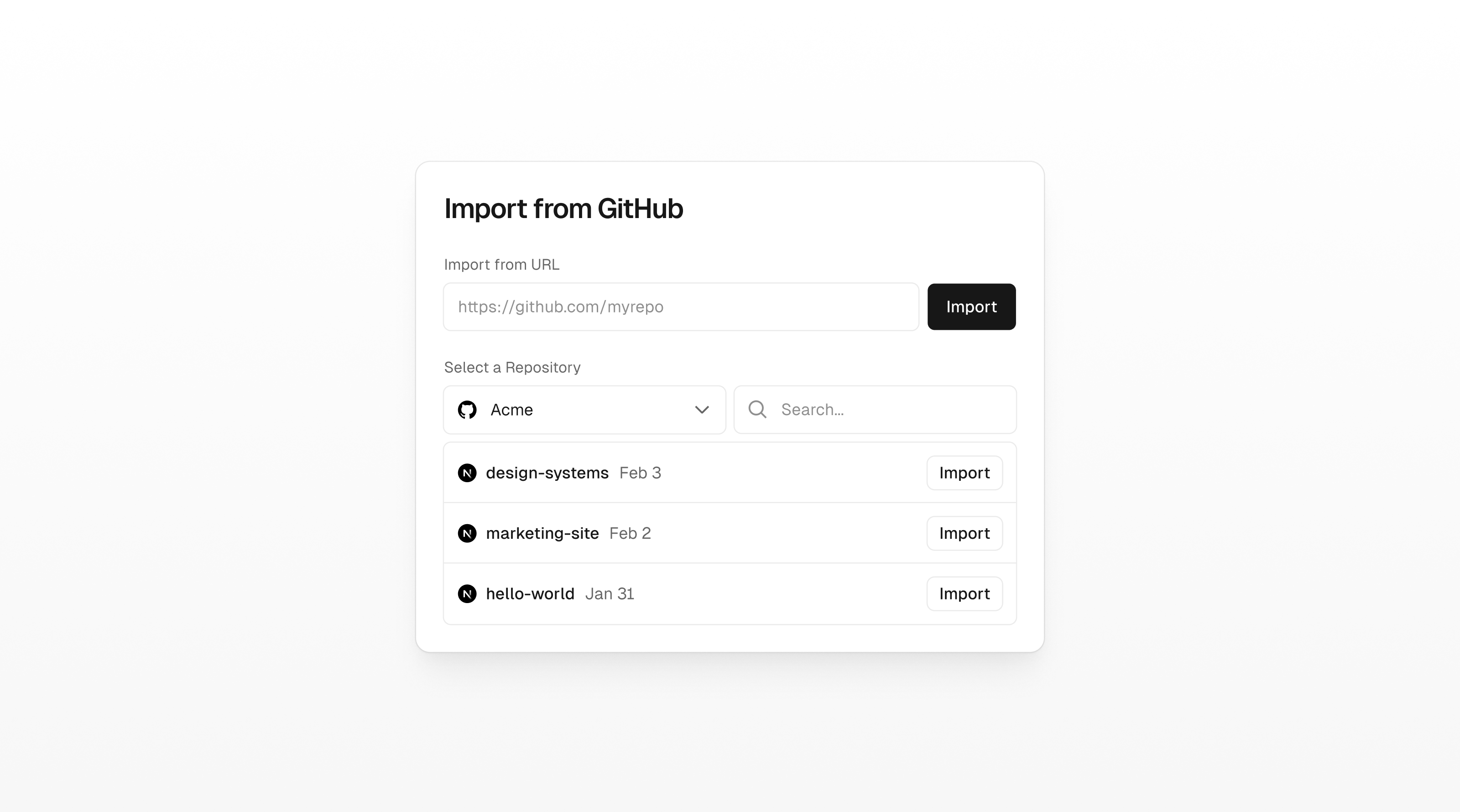

v0 launched in beta as Vercel's experimental "generative UI" playground at v0.dev. The product later graduated to its current home, v0.app, and shifted from a single-prompt component generator to a full conversational app builder with GitHub sync, Figma import, environment variables, and a built-in agent that can iterate on its own diffs. Vercel reports that more than six million developers have signed up.

The pitch is narrow and clear: instead of fighting a generic LLM to produce React that actually compiles, v0 is fine-tuned on Vercel's own stack — Next.js App Router, React Server Components, Tailwind, and shadcn/ui — and ships its output directly into a working Vercel deployment. That tight loop is what separates it from broader competitors like Cursor, Bolt.new, and Lovable.

Sentiment is broadly positive but uneven. On Hacker News and r/nextjs the dominant praise is for the quality of the generated code itself — most threads agree that v0's output drops into a real Next.js project with fewer compile errors than ChatGPT or Cursor's free-form code suggestions. Reddit's r/webdev frequently calls it "the only AI builder that doesn't fight your linter."

The recurring complaints are equally consistent. On the official Vercel community forum a March 2026 thread titled "Is v0 still a reliable AI solution for developers?" gathered users reporting that conversations occasionally vanish, deployments get wiped, and credits drain without producing usable output. Several Reddit comments call out the token-based pricing migration as confusing — generations that used to be predictable now vary in cost depending on how big the project context has grown. Hallucinated imports from non-existent shadcn components also come up in nearly every long thread.

v0 runs on a credit/token model layered on five tiers. Every paid plan can top up credits separately if the monthly allotment runs out.

| Plan | Price | Key features and limits |
|---|---|---|
| Free | $0/month | $5 in monthly credits, v0-1.5-md model, GitHub sync, Vercel deploys |
| Premium | $20/month | $20 in credits, Figma import, higher upload limits, v0 API access |
| Team | $30/user/month | Shared credits, shared workspace, centralized billing |
| Business | $100/user/month | Higher rate limits, advanced security controls |
| Enterprise | Custom | SSO, SAML, priority access, dedicated support |

Best for: Solo founders and small teams already shipping on Next.js + Vercel who want to skip the boilerplate phase of a new project, designers who need a working React frontend from a Figma file, and product managers who want clickable prototypes without filing a Jira ticket.

Not ideal for: Teams on non-Next.js stacks (Remix, Astro, Svelte, Vue), engineers building data-heavy backends where the bottleneck is logic rather than UI, and anyone who needs deterministic per-message pricing — the token-based model rewards short prompts and small projects.

Pros:

Cons:

Three serious alternatives are worth comparing. Bolt.new from StackBlitz runs a full WebContainer in the browser and supports more frameworks but generates less idiomatic code. Lovable goes further toward end-to-end app building, including Supabase integration, but its output is harder to take ownership of after generation. Cursor isn't a generator but an AI-native editor — better for editing an existing codebase than starting one from a blank prompt.

If your day job is shipping Next.js apps on Vercel, v0 is the obvious answer at 83/100 — the free tier is enough to evaluate, Premium pays for itself the first time you skip three hours of shadcn boilerplate, and the GitHub PR workflow respects the way real teams ship. If you're outside that ecosystem, the lock-in is real and a more framework-agnostic tool like Bolt.new is the better starting point. Reliability complaints knock it out of the 90s, but for the prototyping job it actually claims to do, nothing else lands code as cleanly into a production codebase.
